Why Expertise Becomes a Liability

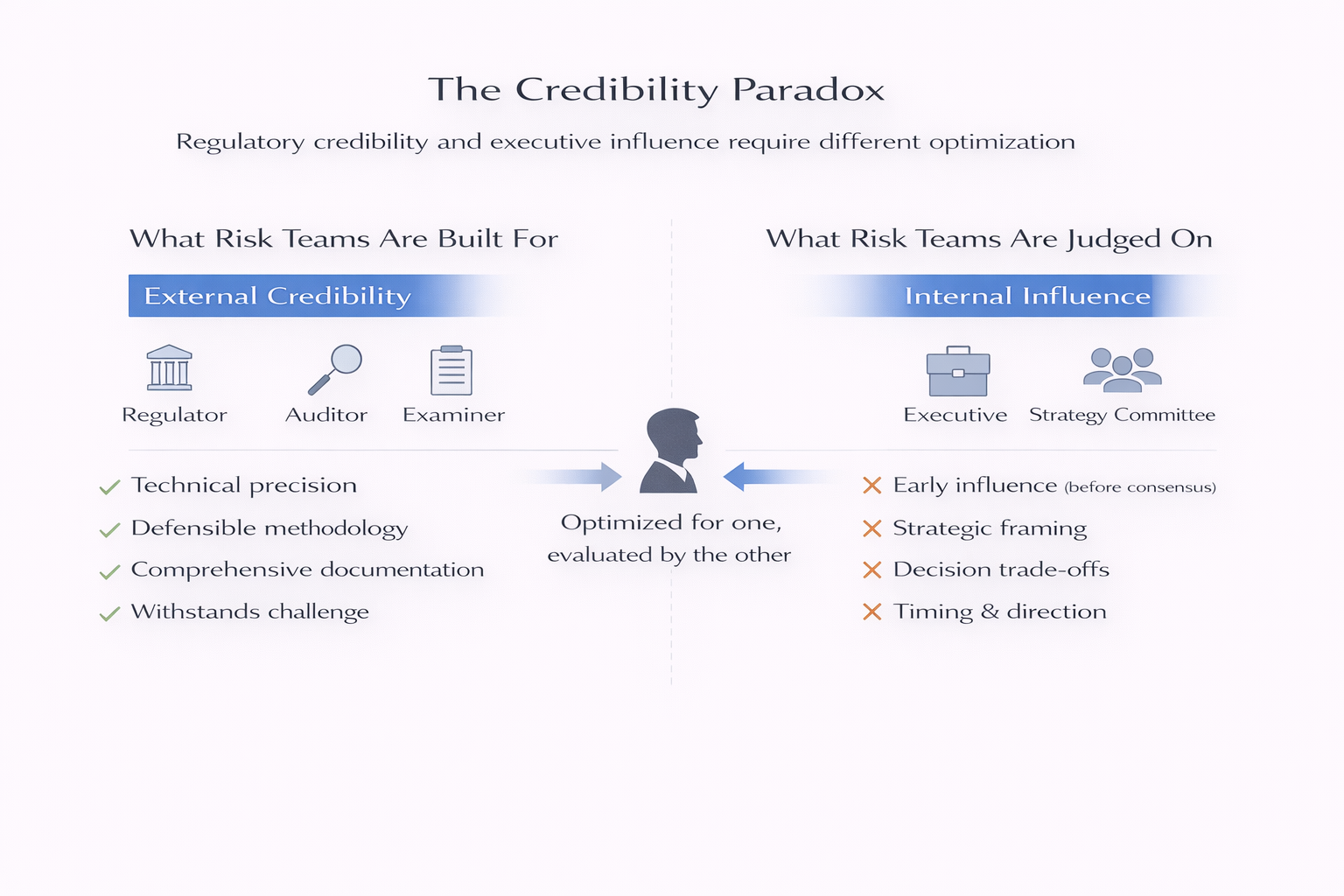

Part 3 - Risk teams are hired for regulatory credibility—technical depth that withstands external scrutiny. They're judged on executive influence—arriving early enough to shape decisions before consensus forms. These require different optimization, but organizations assume they're interchangeable.

Risk Judgment Series

How Risk Teams Actually Fail

Risk functions are designed with sound logic—independence, expertise, board access. Then reality surfaces predictable failure patterns. This series examines how organizational structure, not incompetence, systematically undermines risk teams. Part 3 of 6.

The risk professional sees the problem clearly. The exposure is real, the trend is concerning, the analysis is sound.

They explain it with the precision their training demands: quantitative assumptions, dependencies, and distributions. Language that ensures technical accuracy. Language that regulators would recognize as credible.

The executive hears complexity without direction. Uncertainty without trade-offs. Analysis that doesn’t connect to the decision the committee came to discuss.

The expert finishes presenting. The CFO nods politely. Someone suggests “let’s circle back on this.” The conversation moves to the next agenda item.

The decision was already half-made when the expert spoke.

This isn’t about poor communication skills or anti-intellectual executives. It’s about optimization mismatch. Risk teams are hired for one form of credibility—regulatory and technical. They’re judged on another—strategic influence with decision-makers.

And organizations never reconcile the difference.

I’ve watched this pattern play out across industries and organizational types. The failure is structural, not personal.

The Credibility Logic

Organizations build risk functions with external credibility in mind. Regulators expect technical rigor. Auditors expect defensible methodologies. Board members—at least initially—expect credentials that signal competence to outside scrutiny.

So organizations hire for regulatory credibility. Advanced degrees in quantitative fields. Professional certifications. Experience navigating complex regulatory frameworks. People who can demonstrate technical depth when challenged by examiners or audit committees.

The logic is sound. Regulatory failures are expensive, visible, and attributable. Building a function that can withstand external scrutiny matters.

But credibility with regulators isn’t the same as influence with executives. The first requires demonstrating technical precision and methodological rigor. The second requires arriving early enough to shape decisions before informal consensus forms—and communicating in ways that connect analysis to strategic timing and trade-offs.

Organizations assume one automatically produces the other. The hiring priorities ensure it won’t.

The Audience Mismatch

Risk teams optimize their work for the audiences who evaluate them most directly: regulators, auditors, external examiners. These audiences value precision, defensibility, and comprehensive documentation.

An analysis presented to a regulator succeeds when it demonstrates rigorous methodology and complete consideration of technical factors. Ambiguity is weakness. Qualification is thoroughness. The goal is to prove the risk function’s work can withstand technical challenge.

The same analysis presented to an executive committee operates in a different evaluative environment. Executives aren’t evaluating technical rigor—they’re making resource allocation decisions under time pressure with incomplete information. They need direction, not precision. Trade-offs, not probabilities. Connection to strategic timing, not methodological completeness.

I’ve watched risk professionals present analysis that would satisfy any regulatory review, only to see executive attention drift after three minutes. That isn’t because executives are impatient or anti-intellectual. It’s because the analysis is optimized for an audience that isn’t in the room.

The risk professional is being technically excellent for regulators while executives are trying to decide whether to enter a new market by quarter-end.

The Power Dynamic

Technical expertise doesn’t just speak a different language than executive decision-making. It arrives without the structural positioning that creates influence.

Executives making strategic decisions are already embedded in informal networks where preferences form, coalitions build, and directions emerge before formal meetings happen. Strategy gets shaped through ongoing dialogue, corridor conversations, and iterative testing of ideas with peers. By the time risk arrives with analysis, the real decision has already happened: privately, in fragments, across five conversations that will never be minuted.

Risk functions enter this process as external evaluators. They arrive with analysis, not participation in the informal consensus-building. They have technical assessments, not ownership of the decision. They can identify concerns, but they can’t sponsor solutions or commit resources.

This isn’t about communication style. It’s about structural positioning. Even perfectly clear analysis doesn’t create influence when it arrives after informal direction has been set and comes from a function without decision rights or resource control.

Expertise competes with power, not ignorance. The executive who’s been shaping the strategic direction for weeks isn’t dismissing the risk analysis because they don’t understand it. They’re weighing it against momentum, coalition dynamics, and their own judgment about what’s achievable.

The risk professional has technical credibility. The executive has decision authority and strategic context. When those compete, authority wins—not because it’s right, but because that’s how organizational power works.

“Expertise competes with power, not ignorance.”

The Credibility Paradox

Organizations hire risk functions for regulatory credibility—technical depth that withstands external scrutiny. They judge them on executive influence—arriving early enough to shape decisions before consensus forms. These require different optimization, but organizations assume they're interchangeable.

The Consequence

The consequence isn’t that technical expertise becomes irrelevant. It’s that it becomes marginalized through a specific perception: academic detachment.

I’ve watched brilliant risk professionals—people with genuine insight into emerging patterns—get characterized as “too theoretical” or “risk averse” or “not understanding the business context.” Not because their analysis is wrong, but because the way they communicate signals distance from strategic reality.

When you explain a concern through distributional analysis while executives are debating market timing, you sound disconnected from the actual decision. When you quantify uncertainty while the team is trying to build conviction for a bold move, you sound like you’re undermining momentum rather than informing judgment.

The expertise is real. The perception of detachment is also real. And once a risk function gets labeled as academic or overly cautious, technical rigor becomes evidence of the problem rather than the solution.

Risk teams find themselves in a trap. They were hired for technical credibility with external audiences. They get evaluated on strategic influence with internal decision-makers. When they optimize for the first, they systematically underperform on the second.

And the organization blames the individuals rather than the design that created the mismatch.

📌 Key Takeaways:

- 1️⃣ Regulatory credibility ≠ executive influence. The first requires technical precision for external scrutiny. The second requires arriving early enough to shape informal consensus.

- 2️⃣ Risk teams optimize for the audiences who evaluate them directly. Regulators and auditors value defensibility. Executives need direction and timing.

- 3️⃣ Expertise competes with power, not ignorance. Clear analysis doesn't create influence when it arrives without structural positioning or decision rights.

- 4️⃣ Marginalization happens through perception. Technical rigor gets labeled "academic detachment" when it's optimized for regulatory review but applied to strategic decisions.

The Pattern Isn’t Fixable Through Better Communication Training

The pattern is structural. It can’t be fixed through “better communication training” or executive education on technical concepts. When organizations hire for regulatory credibility and judge for executive influence, they’ve engineered a mismatch they then blame on individuals.

Risk teams aren’t bad at communication. They’re excellent at communicating to the audience they were built for—regulators, auditors, technical reviewers who value precision and defensibility.

Executives need something different. Not simpler—sequenced for decisions. Strategic framing, decision trade-offs, narrative that connects to timing and direction.

The organization assumed one form of expertise would automatically translate to the other. The hiring priorities ensured it wouldn’t.

When the risk function struggles to influence strategy, it’s not because experts failed to translate. It’s because translation was never the actual problem.

They were optimized for the wrong audience from the start.

Frequently Asked Questions

For readers seeking quick answers to common questions about expertise, influence, and the credibility paradox:

Don’t risk functions need technical expertise to be credible with regulators and boards?

Yes—but regulatory credibility and executive influence are different forms of authority that require different optimization. Organizations hire for the first, judge performance on the second, and then blame individuals when technical expertise doesn’t automatically translate to strategic influence.

Can’t risk professionals simply adapt their communication style for different audiences?

This frames the problem as individual communication skill when it’s actually structural positioning. Risk functions arrive at strategic discussions after informal consensus has formed, without decision rights or resource control. Even perfectly clear analysis doesn’t create influence when expertise competes with established power dynamics.

Is this pattern unique to highly technical risk functions?

No. I’ve observed it wherever organizations hire specialists for external credibility (legal, compliance, technical functions) and then expect those specialists to influence internal strategy. The mechanism—optimization for one audience, evaluation by another—operates across functions.

What signals indicate a risk function is experiencing this pattern?

Risk professionals being praised for regulatory readiness while being excluded from early strategic discussions. Expertise getting characterized as “too theoretical” or “risk averse.” Analysis that passes technical review but doesn’t influence decisions. Functions that succeed with external audiences (regulators, auditors) while struggling with internal influence.

Continue the Series

This is Part 3 of a 6-part series examining how risk teams fail through structural design, not incompetence.

Next: How risk functions drown in their own output—and why expanding headcount makes the problem worse, not better.

Related Reading

- When Independence Becomes Isolation: How the structural separation designed to empower risk teams systematically cuts them off from the early, messy signals they need.

- The Compliance Trap: Why risk teams become reporting factories when asymmetric incentives drive optimization toward compliance over strategic insight.

Regulatory credibility and executive influence aren’t the same currency. Organizations hire for one and expect the other—then wonder why the exchange rate is broken.