How Incentives Quietly Shape What Gets Taken Seriously

Part 5 - How organizational incentives filter which risks become urgent through quiet alignment with leadership reward structures and performance metrics.

🌟 RISK JUDGMENT SERIES: When Risk Stops Behaving — Part 5 of 8

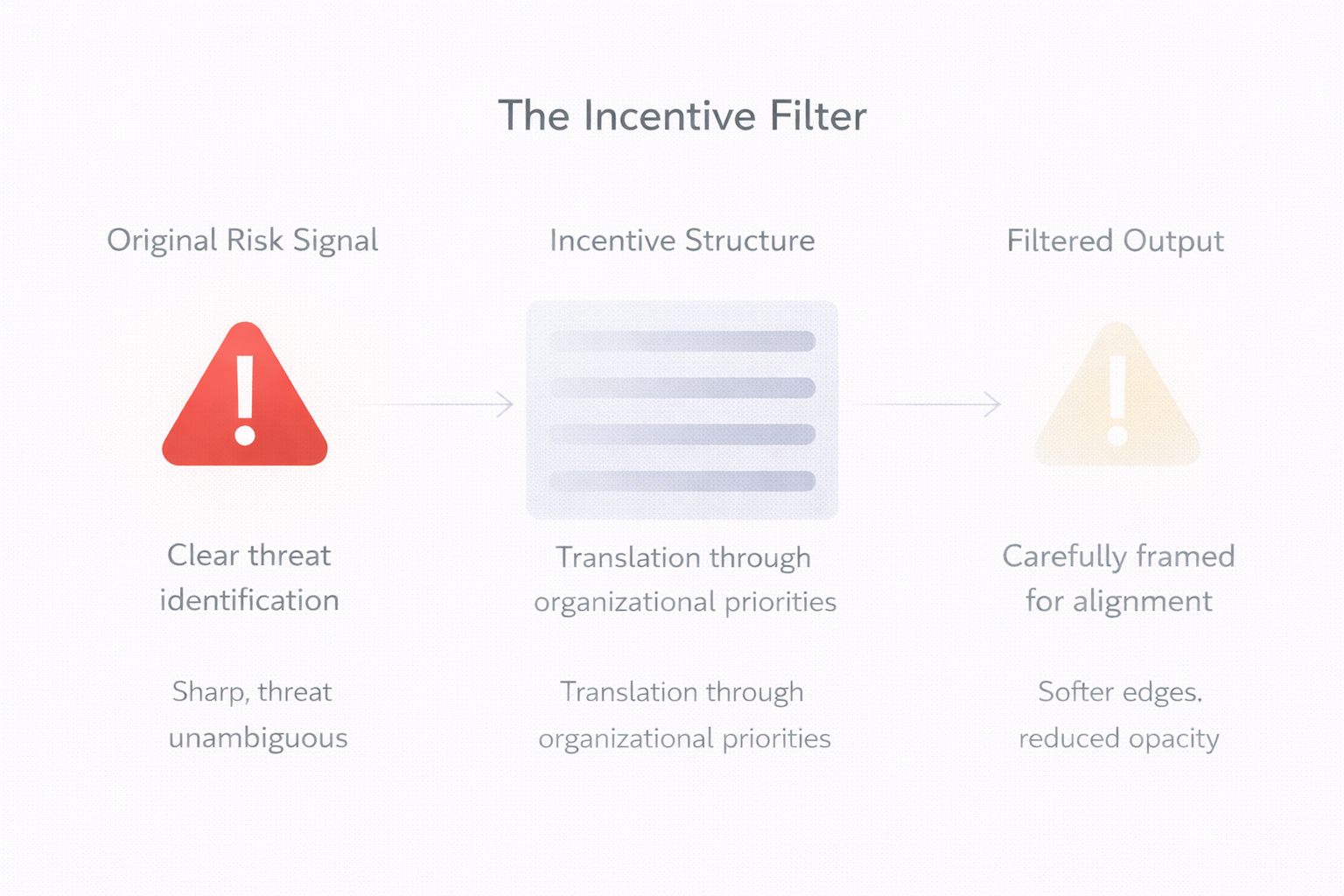

We've examined how silence becomes rational, decisions lose custody, governance displaces judgment, and escalation erodes. This post reveals the mechanism driving all these patterns: how incentives filter what can be taken seriously—not through active suppression, but through quiet alignment with what gets rewarded.

The posts that follow explore how experience becomes a blindfold, how judgment gets outsourced, and why urgency arrives too late. Together, they show how organizational failure emerges from structure, not intent.

I've watched two risks sit side by side on the same risk register for eighteen months.

Both were rated amber. Both had similar potential impact. Both had been escalated multiple times.

One disappeared from the register within three months—budget allocated, owner assigned, action taken.

The other is still there. Still amber. Still discussed. Still somehow never quite urgent enough.

The difference wasn't evidence. Both risks were well-documented, clearly articulated, backed by data.

The difference was collision.

One risk threatened a metric that leadership was measured on. The other threatened something important—but not rewarded.

We've already seen how silence becomes rational, how decisions lose custody, how governance displaces judgment, and how escalation erodes. What drives all these patterns?

Incentives—operating beneath the surface, shaping what can be taken seriously.

Organizations don't respond to danger in the abstract. They respond to what collides with what they reward.

In practice, incentives—not intent—decide what becomes urgent.

This isn't about risk. It's about truth under constraint.

How Incentives Filter Truth

In most organizations, truth isn't suppressed. It's translated.

Reframed into language that keeps it accurate—but safe.

I once watched a risk assessment move through multiple review cycles. The substance didn't change—the threat was the same, the data identical. But the language evolved with each pass:

Early version: "This could significantly affect our operating environment"

Next iteration: "This presents challenges to current approaches"

Later revision: "This represents an area for consideration"

Penultimate draft: "This warrants continued observation"

Final version: "This has been noted for monitoring"

Each translation was technically accurate. Each made the risk slightly more abstract, slightly more distant, slightly easier to defer.

By the final version, the risk was still present—but it had been filtered through language that prevented it from landing with force.

"That's how incentives work. They don't reject truth. They shape how truth can be expressed until it no longer collides with what's rewarded."

The risk becomes "emerging" instead of current. "Outside core focus" instead of central. "Better suited for a future phase" instead of now.

All technically correct. All functionally deflective.

How Seriousness Shifts With Incentives

Organizations don't always ignore misaligned risks. Sometimes they acknowledge them fully—and still don't act.

The pattern works in two directions:

When incentives don't support action, risks get priced. Leadership acknowledges the exposure, understands the implications, agrees it's material. Then comes the careful inventory: structural complexity, resource implications, timing considerations, stakeholder sensitivities.

The risk doesn't get rejected; it gets weighed against the cost of addressing it. And when addressing the risk costs more than carrying it, nothing moves.

When incentives shift to support action, the same risks that seemed impractical become urgent. Resource constraints that felt insurmountable dissolve. Timing that wasn't right becomes essential.

Not because the risk changed—because where it sits relative to what's rewarded changed.

"The same risk, the same data, entirely different urgency depending on alignment with reward structures."

This is the clearest evidence that seriousness is negotiated through incentives rather than settled by the evidence itself.

How Escalation Gets Redirected

There's another pattern worth noting: when risks don't align with incentives, they don't get dismissed—they get redirected.

Someone raises a structural concern. Leadership engages seriously. Questions follow—but not the ones that address the core issue.

Instead: "What's the broader context?" "Have we seen this elsewhere?" "What additional data would help?" "Can you come back with deeper analysis?"

The risk gets acknowledged. Action items multiply.

But the action isn't decision—it's further examination. The risk becomes a research problem rather than a judgment call.

The escalation succeeded in generating engagement. It failed in generating ownership.

What was meant to trigger decision instead triggered workload—for the person raising it, not for the people meant to address it.

The risk remains visible. It's just been converted into process.

📌 Key Takeaways:

- 1️⃣ Organizations respond to what collides with what they reward—not to danger in the abstract

- 2️⃣ Truth doesn't get suppressed—it gets translated through language that keeps it accurate but safe

- 3️⃣ When incentives don't support action, risks get priced; when incentives shift, the same risks become urgent

- 4️⃣ Escalation that doesn't align with incentives gets redirected—converted into process rather than decision

- 5️⃣ The question shaping decisions is rarely "Is this risk real?" but "Is this risk survivable given current incentives?"

Why This Feels Rational From The Inside

None of this filtering and redirection feels like avoidance to people inside the system.

It feels like judgment.

People are managing real constraints: resource limitations, competing priorities, stakeholder relationships, strategic commitments already made.

They're balancing signal against stability. Truth against timing.

And the system has taught them which truths can move—and which must wait.

A risk that threatens a tracked metric gets immediate attention because raising it aligns with incentives. You're protecting what the organization values and measures.

A risk that threatens something unmeasured gets careful framing because raising it misaligns with incentives. You're asking the organization to care about something it doesn't reward caring about.

The system doesn't reward disruption. It rewards discernment.

And discernment, over time, becomes the ability to recognize which truths can move—and which must wait.

Truth Under Constraint

In every organization, there are truths everyone recognizes—but few feel authorized to act on.

Not because they're false. But because acting on them would collide with what is currently rewarded.

And the question that quietly shapes decision-making is rarely:

"Is this risk real?"

It's:

"Is this risk survivable—given how things work right now?"

That's truth under constraint. And it's the mechanism beneath every pattern we've examined in this series.

When incentives filter what can be taken seriously, organizations don't lack awareness.

They lack permission to act on what they see.

Incentives operate beneath every pattern we've examined—filtering truth, pricing action, redirecting escalation.

Frequently Asked Questions

For readers seeking quick answers about how incentives shape what gets taken seriously:

How do incentives shape what risks get taken seriously?

Incentives create an invisible filter. Risks that threaten tracked metrics, complicate committed narratives, or affect measured performance move quickly—they collide with what leadership is rewarded for protecting. Risks that threaten unmeasured things (resilience, second-order effects, long-term capacity) remain perpetually "important" but never urgent. The difference isn't severity—it's friction with the reward structure.

Why doesn't evidence alone make risks urgent?

Evidence matters, but alignment matters more. Well-documented risks that don't fit current priorities get translated through careful language: "emerging," "non-core," "outside current focus." The risk isn't rejected—it's filtered through incentive structures until it no longer lands with force. Organizations don't suppress truth; they shape how truth can be expressed to prevent collision with what's rewarded.

Why do previously dismissed risks suddenly become urgent?

The risk hasn't changed; the incentive structure has. When organizational context shifts (external pressure, leadership change, strategic reprioritization), risks that seemed impractical become essential. Resource constraints dissolve, timing becomes urgent, obstacles disappear. This reveals that seriousness is negotiated through incentives rather than determined by evidence. The same risk can be "not the right time" in one context and a "critical priority" in another, depending solely on alignment with current rewards.

Next in the series: When Experience Becomes a Blindfold

We've traced how structure shapes failure: silence, decision fade, governance displacement, escalation erosion, and incentive filtering. The next post examines a subtler barrier—how the confidence that comes from pattern recognition can prevent us from seeing what no longer fits those patterns.

Subscribe to The Risk Philosopher to follow this 8-part series examining how organizational failure emerges from structure, not intent—and receive new posts on risk judgment and emerging risks.

Related Reading:

In This Series:

- Part 3: When Governance Becomes a Substitute for Judgment

- Part 4: Risk Escalation Fails Long Before Anyone Raises Their Voice

- Part 6: When Experience Becomes a Blindfold (Coming soon)

When incentives decide what can be taken seriously, organizations don't lack truth—they lack permission to act on it.