How Judgment Gets Quietly Outsourced

Part 7 - How organizational judgment gets delegated to decision-making tools and risk frameworks when exercising direct judgment becomes too costly—and why this automation feels like progress until critical failures emerge.

🌟 RISK JUDGMENT SERIES: When Risk Stops Behaving — Part 7 of 8

We've examined how silence becomes rational, decisions lose custody, governance displaces judgment, escalation erodes, incentives filter truth, and experience creates blindness. This post reveals the culmination: when all these mechanisms compound, organizations outsource judgment to tools and frameworks—not from lack of capability, but because exercising direct judgment has become too costly.

The next and final post examines what arrives when judgment has been outsourced: urgency without the capacity to act on it.

I've watched organizations buy increasingly sophisticated tools for decisions they used to make with judgment.

Risk scoring systems. AI-driven prioritization. Decision frameworks with twenty variables and weighted outputs.

Each one promised to improve decision quality. Make risk assessment more consistent. Remove human bias.

And they did—to a point.

But something else happened quietly: judgment didn't get better. It got relocated.

Not eliminated. Not replaced. Relocated—from people to process, from thinking to following, from weighing trade-offs to executing outputs.

When environments punish judgment, whether through misaligned incentives, the accumulated cost of silence and eroded escalation, or experience-based blindness, organizations don't eliminate it. They outsource it.

To tools. To frameworks. To anything that makes the act of deciding feel less exposed.

This is the final mechanism we need to examine: how judgment gets quietly delegated to systems that can't actually exercise it—and why this feels like progress until the moment it fails.

Why Judgment Gets Delegated

Judgment requires exposure. Someone decides. Someone owns the outcome. Someone is visible when it goes wrong.

In environments where judgment has been repeatedly punished (where raising risks that cut against incentives gets redirected, where experience creates confident blindness, where escalation erodes before it lands), delegating that exposure becomes rational.

Tools and frameworks offer relief.

They provide structure where uncertainty feels overwhelming. They create consistency where human judgment varies. They distribute accountability where individual exposure feels dangerous.

Most importantly: they make decisions feel defensible even when they're wrong.

I once watched a risk committee defer a judgment call to a scoring model. The model rated a known structural vulnerability as "medium" because it didn't fit the quantifiable variables. The committee knew the rating missed essential context—but the model's output provided cover.

If the decision later proved wrong, the explanation was ready: "We followed the framework." No one's judgment was exposed. No one had to defend their thinking. The process absorbed the accountability.

"This isn't incompetence. It's adaptation to an environment that punishes exercising judgment while rewarding adherence to process."

The delegation feels like rigor. It looks like improvement. What it actually represents is judgment becoming too costly to exercise directly.

When Tools Become Cover

Tools and frameworks aren't problems themselves. They're essential for managing complexity at scale.

The problem emerges when tools shift from supporting judgment to substituting for it.

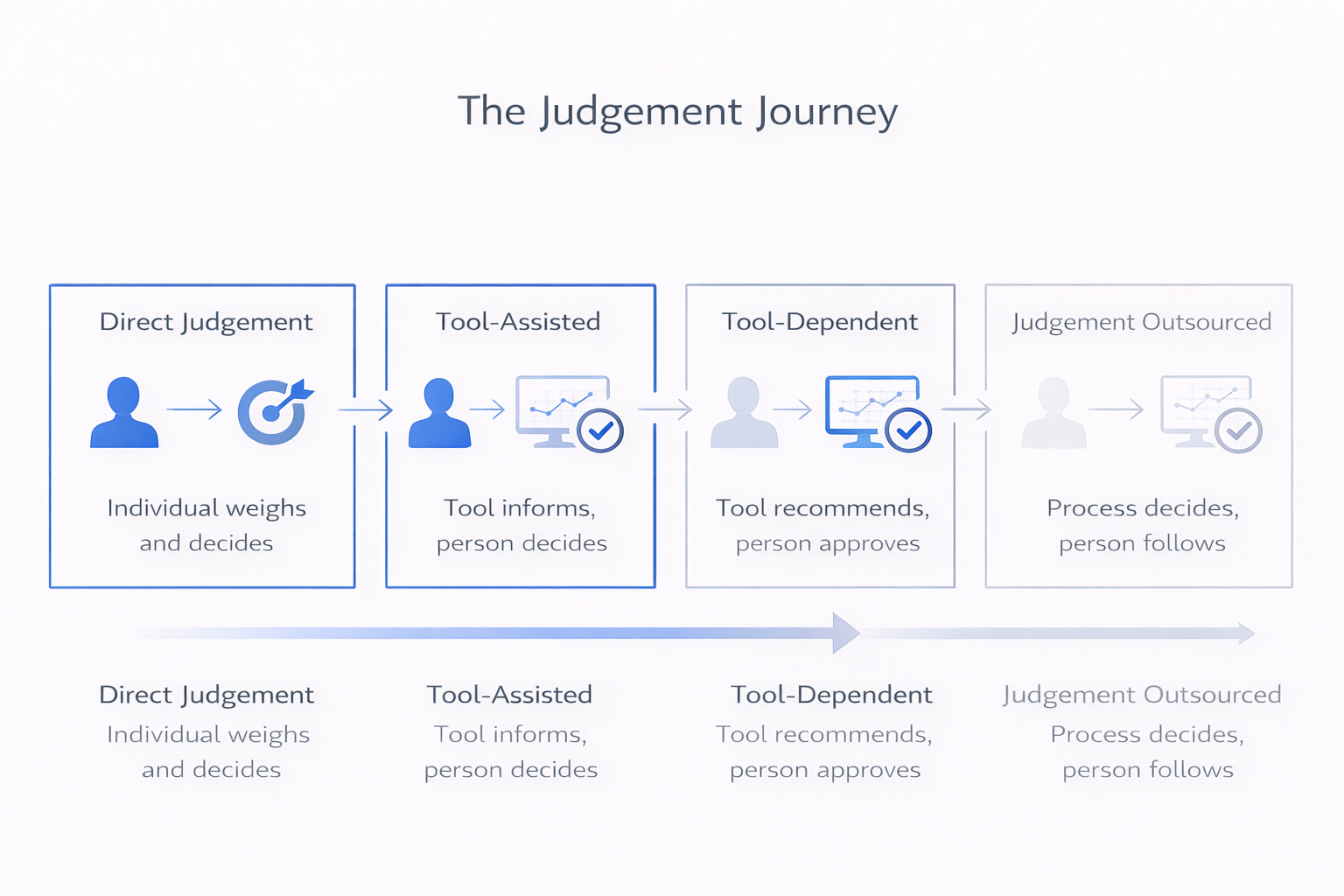

This happens gradually:

First, the tool is introduced to assist. It provides data, structure, comparison. Judgment still interprets and weighs.

Then, as the environment continues to punish exposed judgment, the tool's outputs start being treated as conclusions rather than inputs.

"What does the model say?" starts to stand in for "What should we do?", and the gap between those two questions disappears.

The tool that was meant to inform judgment now circumvents it. And because the tool's logic is often opaque ("the algorithm determined..."), questioning its output feels like questioning expertise itself.

I've seen this with AI-driven risk prioritization systems. They're trained on historical data—which means they're excellent at recognizing patterns that have already materialized. But they struggle with novel risks, second-order effects, and situations where context matters more than quantifiable variables.

Yet when the output appears precise (scores to two decimal places, confidence intervals, ranked priorities), it carries an authority that makes judgment feel like opinion in comparison.
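To make the point about precision concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the three factors, their weights, the 1-5 rating scale, and the two example risks do not come from any real framework. It computes a two-decimal score from analyst ratings, then asks how far that score could move if each rating were off by a single point.

```python
from itertools import product

# Hypothetical weights for a 1-5 rating scale; not taken from any real framework.
WEIGHTS = {"likelihood": 0.45, "impact": 0.35, "detectability": 0.20}


def weighted_score(ratings):
    """Weighted average of 1-5 ratings, scaled to 0-10 and rounded to two decimals."""
    raw = sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
    return round(raw * 2, 2)


def score_range(ratings, wobble=1):
    """Lowest and highest score if every rating could be off by +/- wobble."""
    names = list(WEIGHTS)
    scores = []
    for deltas in product((-wobble, 0, wobble), repeat=len(names)):
        # Shift each rating, keeping it inside the 1-5 scale.
        shifted = {n: min(5, max(1, ratings[n] + d)) for n, d in zip(names, deltas)}
        scores.append(weighted_score(shifted))
    return min(scores), max(scores)


# Two made-up risks that the ranking would place in a definite order.
risk_a = {"likelihood": 3, "impact": 4, "detectability": 2}
risk_b = {"likelihood": 4, "impact": 3, "detectability": 2}

for label, risk in (("A", risk_a), ("B", risk_b)):
    low, high = score_range(risk)
    print(f"Risk {label}: score {weighted_score(risk):.2f}, plausible range {low:.2f} to {high:.2f}")
```

Under these assumed numbers, the two risks get different scores and therefore a clear ranking, yet each score sits well inside the other's plausible range. The decimals describe the arithmetic, not the certainty of the ratings that went into it.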

The result: organizations stop weighing. They start following.

And when something outside the model's training emerges, the system can't adapt—because the judgment that would recognize the anomaly has been quietly delegated away.

The Illusion of Distributed Accountability

One of the most seductive aspects of outsourcing judgment is that it appears to distribute accountability.

The tool was developed by experts. The framework was approved by leadership. The process was reviewed by governance. Everyone contributed. Everyone signed off.

But distributed accountability is not the same as maintained accountability.

When judgment is outsourced, responsibility doesn't spread—it dissolves.

"Everyone was involved. No one was responsible."

When something fails, no one was negligent. The process was followed. The tool was applied correctly. The framework was adhered to.

This is the organizational version of what we've seen throughout this series:

- How silence becomes rational when individual exposure is asymmetric.

- How decisions lose custody when ownership disperses across committees.

- How governance displaces judgment when process absorbs anxiety.

- How escalation erodes when responsibility becomes collective.

- How incentives filter truth when raising misaligned risks is costly.

- How experience creates blindness when pattern recognition generates certainty.

Judgment outsourcing is the culmination: when all these mechanisms compound, exercising direct judgment becomes so difficult that delegating it to process feels like the only viable path.

The accountability appears distributed. In practice, it has evaporated.

What Quietly Gets Lost

When judgment is outsourced, certain capacities disappear—not visibly, but functionally.

Judgment involves weighing competing considerations that don't resolve into a formula. It requires holding uncertainty without collapsing it prematurely into clarity. It depends on recognizing when rules don't apply and context overrides precedent.

Tools can't do this.

A risk scoring framework might rate supply chain concentration and geopolitical instability separately—each assessed, each weighted, each scored.

But it can't capture the interaction: how concentration amplifies geopolitical risk in ways that don't appear in either variable alone.

That recognition requires judgment. The kind that holds multiple considerations simultaneously and notices what emerges from their combination.
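A small sketch in Python shows the shape of the gap. The weights, the 0-to-1 ratings, and the two scenarios are hypothetical, and treating the interaction as a simple product is only one assumed way to express "concentration amplifies geopolitical risk". It is not a claim about how any real scoring framework works.

```python
import math

# Hypothetical equal weights for two factors rated on a 0-1 scale.
WEIGHTS = {"supply_concentration": 0.5, "geopolitical_instability": 0.5}


def additive_score(concentration, instability):
    """Score each factor on its own and add the weighted results."""
    return (WEIGHTS["supply_concentration"] * concentration
            + WEIGHTS["geopolitical_instability"] * instability)


def joint_exposure(concentration, instability):
    """Assumed stand-in for compounding: high only when both factors are high."""
    return concentration * instability


# Scenario 1: very concentrated suppliers in a stable region.
# Scenario 2: moderately concentrated suppliers in a moderately unstable region.
scenarios = {
    "concentrated_but_stable": (0.9, 0.1),
    "moderate_on_both": (0.5, 0.5),
}

for name, (concentration, instability) in scenarios.items():
    print(name,
          "additive:", round(additive_score(concentration, instability), 2),
          "joint:", round(joint_exposure(concentration, instability), 2))
```

With these made-up inputs the additive score is the same for both scenarios, while the joint measure differs between them. A modeler could add an interaction term like this to a framework, but only after someone has judged that this particular interaction matters, which is the capacity the article is describing.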

When judgment is outsourced, this integrative capacity disappears—not because the tool is flawed, but because the task exceeds what tools can do.

What remains is precision without wisdom. Consistency without adaptability. Defensibility without responsibility.

Organizations appear to be deciding. In practice, they are following.

Why Outsourcing Feels Like Progress

None of this is visible when it's happening.

Judgment outsourcing feels like maturity. Like sophistication. Like the natural evolution of organizational capability.

The tools are impressive. The frameworks are rigorous. The process is documented.

It creates coherence where uncertainty would otherwise dominate. And in environments that have systematically punished exercising judgment—through all the mechanisms we've examined—outsourcing it feels like relief.

The exposure is gone. The defensibility is present. The process is clear.

Until the moment arrives when the tool can't answer the question being asked—and the judgment needed to recognize that has been delegated away.

📌 Key Takeaways:

- 1️⃣ When environments punish judgment through misaligned incentives and accumulated failures, organizations outsource it to tools and frameworks

- 2️⃣ Judgment outsourcing progresses gradually—tools shift from assisting decisions to substituting for them

- 3️⃣ Tool outputs carry authority (precision, confidence intervals) that makes direct judgment feel like opinion in comparison

- 4️⃣ Distributed accountability through process creates the illusion of responsibility while actually eliminating it

- 5️⃣ Tools can't exercise judgment—they can't weigh context, recognize novel interactions, or adapt when reality doesn't fit their models

Tools don't eliminate judgment.

They relocate it.

And when judgment has been relocated to systems that can't exercise it, organizations don't fail because they lack expertise.

They fail because the expertise they have can no longer reach the decisions that matter.

That is where this progression reaches its final stage.

Frequently Asked Questions

For readers seeking quick answers about how judgment gets quietly delegated to tools and frameworks:

Why do organizations outsource judgment to tools and frameworks?

Because exercising direct judgment has become too costly. In environments where incentives punish misalignment, where escalation erodes, and where experience creates blindness, individual judgment exposure feels dangerous. Tools offer relief: they provide defensibility ("we followed the framework"), distribute accountability (no one person is exposed), and create consistency. The delegation feels like rigor and looks like improvement—but it represents judgment becoming too difficult to exercise directly.

What's wrong with using tools to support decision-making?

Nothing. Tools are essential for managing complexity. The problem emerges when tools shift from supporting judgment to substituting for it. This happens gradually: "What does the model say?" starts to stand in for "What should we do?" When tool outputs are treated as conclusions rather than inputs, the judgment that would recognize anomalies, weigh context, or notice novel interactions gets delegated away. Tools can't exercise judgment: they can't adapt when reality doesn't fit their models.

How does judgment outsourcing lead to failure?

Organizations don't fail from lack of expertise—they fail because expertise can no longer reach decisions that matter. When judgment has been outsourced to tools, and those tools encounter situations they weren't designed for (novel risks, interaction effects, context-dependent decisions), there's no judgment available to recognize the limitation. The system followed its process, produced its output, and everyone acted in good faith—but the capacity to weigh, adapt, and question had been quietly delegated away.

Next in the series: When Seriousness Arrives Too Late

We've traced the complete progression: silence, decision fade, governance displacement, escalation erosion, incentive filtering, experience-based blindness, and judgment outsourcing. The final post examines the endpoint—when urgency finally arrives but without the capacity, ownership, or clarity needed to act effectively. This is how organizational failure completes itself.

Subscribe to The Risk Philosopher to follow this 8-part series examining how organizational failure emerges from structure, not intent—and receive new posts on risk judgment and emerging risks.

Related Reading:

In This Series:

- Part 5: How Incentives Quietly Shape What Gets Taken Seriously

- Part 6: When Experience Becomes a Blindfold

- Part 8: When Seriousness Arrives Too Late (Coming soon)

When judgment becomes too costly to exercise, outsourcing it doesn't solve the problem—it just makes the failure harder to locate.