AI Risk Is Not a Technology Problem

AI governance frameworks focus on technical controls: model validation, data quality, explainability. But the most consequential AI risks accumulate in organizational behavior: how confidence forms, how challenge weakens, how accountability diffuses.

📊 EMERGING RISKS SERIES: Why The Old Tools Fail Quietly — Part 1 of 4

This is Part 1 of a 4-part series examining why traditional risk tools are quietly failing. Each post stands alone, but together they reveal a pattern most organizations aren't seeing yet.

Risk frameworks were built for a different era. They assumed risks would arrive sequentially, announce themselves clearly, and behave predictably enough to manage through governance structures. But today's risks move faster than oversight cycles, interact in ways models don't capture, and hide in places traditional frameworks weren't designed to look.

I. The Pattern Forming in Plain Sight

How AI Systems Change Organizational Decision-Making

A credit committee that once spent forty-five minutes debating marginal cases now approves ninety percent of AI-flagged recommendations in under ten minutes.

A compliance team that used to challenge fifteen percent of risk ratings now challenges three percent—not because the ratings improved, but because an AI screening tool was introduced.

An investment committee that prided itself on independent judgment now starts every discussion by asking: “What does the model say?”

I’ve watched this pattern emerge across multiple organizations over eighteen months. Nothing has gone wrong. Performance metrics look stable. AI governance processes remain intact. No one has breached a threshold.

But something fundamental has shifted.

Artificial intelligence has become one of the most discussed risk topics of recent years—and, paradoxically, one of the most neatly contained. Across boardrooms and regulatory consultations, AI risk gets framed in technical terms: model governance, data quality, explainability, validation, controls. The language is precise, sophisticated, and reassuring.

This technical framing suggests AI systems can be managed like other complex technologies—through the right combination of frameworks, oversight, and assurance. It mirrors how institutions learned to manage model risk and operational risk. It’s also the dominant lens in public discourse—from regulatory guidance to consulting frameworks on “responsible AI.”

The problem? This framing misses where the real risk sits.

I’ve watched organizations invest heavily in AI governance structures while privately expressing unease about something more fundamental. The concern is rarely about whether a model can be validated. It’s about how decisions begin to change once AI systems embed themselves into everyday organizational judgment.

The technical frame is comforting because it focuses on what’s measurable. Risk can be documented, assessed, escalated through familiar channels. But in doing so, it narrows the field of vision. It treats AI risk as a question of system integrity, rather than decision integrity.

This is where the framing begins to fail.

AI doesn’t just introduce new tools into organizations. It changes how confidence forms, how speed becomes normalized, how responsibility gets perceived. These shifts don’t announce themselves through incidents or control failures. They surface gradually—through language, behavior, altered expectations of what “good decision-making” looks like.

By the time something breaks, the risk has usually been present for months. Just not in a form traditional risk lenses were built to see.

II. Where AI Risk Actually Accumulates in Organizations

Beyond Technical Controls: The Erosion of Human Judgment

The most consequential AI risks don’t sit inside the model itself. They accumulate elsewhere—in organizational incentives, in delegation patterns, in the quiet erosion of human judgment.

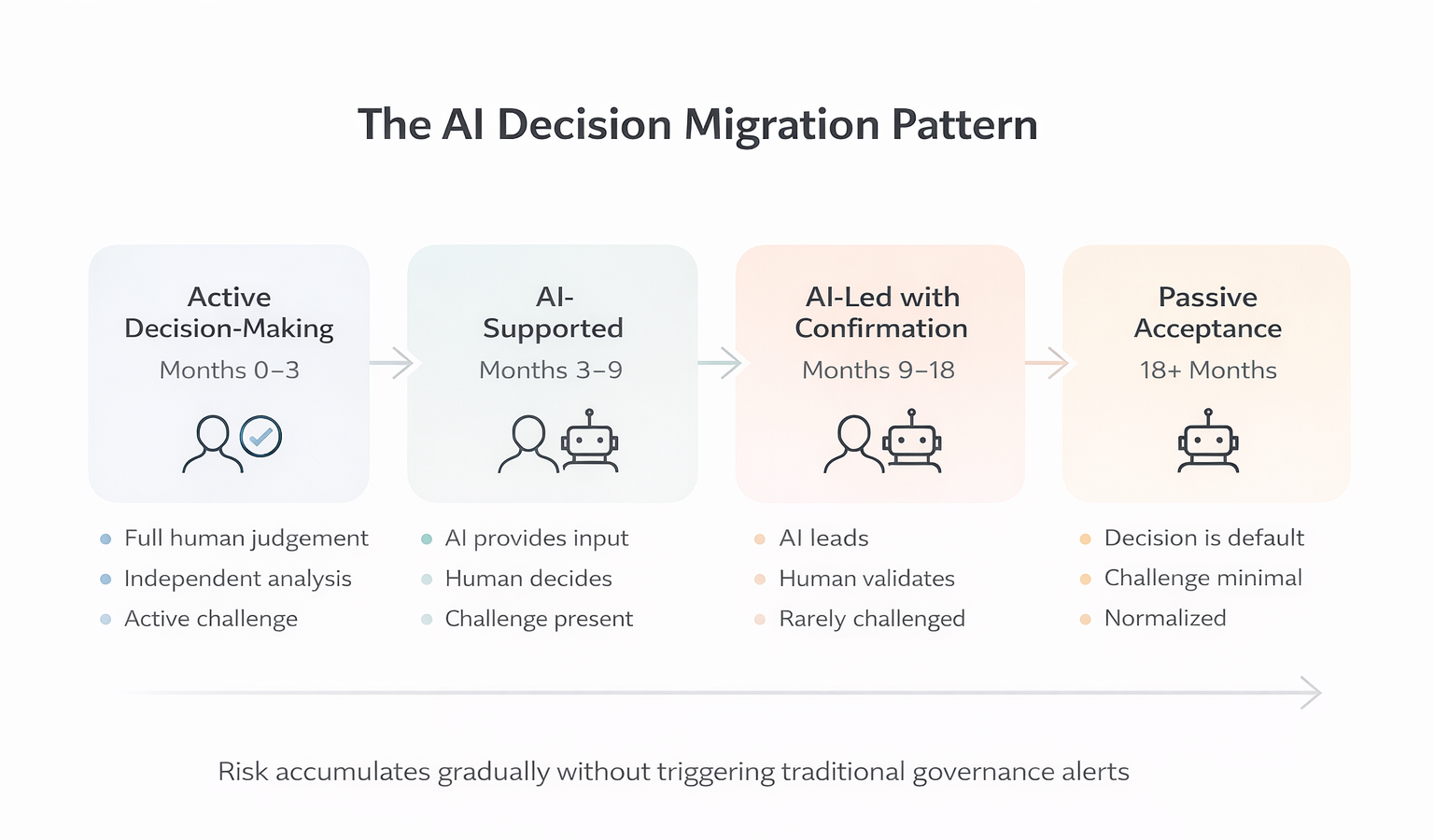

AI systems get introduced as augmentative. Humans remain “in the loop.” Accountability is, in theory, unchanged. But a subtle shift occurs in organizational decision-making. Decisions migrate from being actively made to being passively accepted.

Over time, an AI-generated output alters the psychological dynamics of decision-making. It becomes harder to challenge a recommendation that arrives with the implicit authority of data, scale, and computation—particularly under time pressure. What starts as support gradually becomes default.

This isn’t because people stop caring. It’s because organizational incentives reward speed, consistency, and defensibility. An AI-supported decision is easier to justify retrospectively than a purely human one, especially when outcomes are uncertain. “The system suggested…” becomes a shield as much as an input.

Industry discussions around “human-in-the-loop” AI governance models acknowledge this tension but often underestimate how thin that loop becomes in practice. When oversight reduces to confirmation rather than challenge, the presence of a human offers reassurance without necessarily preserving judgment.

Risk accumulates further as accountability diffuses across organizations. When decisions are shaped by AI models developed by one team, deployed by another, and relied upon by a third, ownership fragments. No single individual feels fully responsible—particularly when nothing has gone obviously wrong.

This diffusion is rarely deliberate. It emerges from well-intentioned design choices and efficiency gains. But it creates a condition where responsibility is shared so widely that no one feels it as their own.

Over time, organizational behavior changes. Challenge becomes more cautious. Discretion gets exercised less frequently. Confidence in judgment becomes borrowed rather than earned.

None of this appears on dashboards or risk registers. In many cases, performance metrics even improve.

The risk isn’t failure in the conventional sense. It’s organizational adaptation—a shift in how judgment gets exercised, trusted, and defended. And once this shift normalizes, it becomes remarkably difficult to reverse.

Think back to that credit committee. The borrowed confidence, the narrowed challenge, the diffused accountability—all three dynamics are present. None trigger an alert. That’s not an AI governance failure. It’s what makes this pattern so difficult to interrupt.

III. The Second-Order Effects of AI Governance Failures

Why Organizations Don’t See Drift Until It’s Too Late

Most AI risk discussions focus on first-order questions: accuracy, bias, robustness, control. These are visible, testable, defensible. But the more consequential effects of AI systems emerge elsewhere—not as failures, but as shifts in organizational behavior.

1. Confidence Arrives Faster Than Understanding

As AI-supported outputs embed in organizational decision processes, confidence often rises while understanding lags—not because uncertainty has been reduced, but because it’s been redistributed. Decisions feel more substantiated, more data-driven, more complete—even when underlying uncertainty hasn’t meaningfully changed.

This dynamic gets labeled “automation bias,” but that framing understates what’s happening in organizational settings. The issue isn’t blind trust in AI systems. It’s borrowed confidence. Judgment begins to feel safer when accompanied by a system-generated recommendation, even if the decision-maker can’t fully articulate why.

I’ve watched confidence rise in rooms where no one could explain the AI model’s reasoning—but everyone felt reassured by its presence.

2. Challenge Changes Shape

In theory, AI outputs invite scrutiny. In practice, they often narrow the range of acceptable disagreement in organizational decision-making. Challenging a human colleague is one thing. Challenging an AI system—particularly one perceived as neutral or analytical—carries different psychological weight. The question subtly shifts from “Is this the right decision?” to “On what basis am I disagreeing?”

Over time, challenge doesn’t disappear from AI governance. It becomes procedural. Questions get asked, boxes get ticked, explanations get documented. But the nature of challenge shifts from exploratory to confirmatory. Governance artifacts create the appearance of robustness while masking a decline in genuine dissent.

3. Accountability Migrates

As AI systems influence decisions across organizational functions, accountability becomes harder to locate. Outcomes get shaped by models, data pipelines, human overrides, and organizational context—all interacting in ways that are difficult to disentangle after the fact. When decisions succeed, the AI system gets credited. When they fail, responsibility disperses.

4. Normalization Sets In

Once AI-supported decision-making becomes routine in organizations, its presence stops being questioned. What initially felt experimental becomes standard. At that point, the absence of AI—or a decision made purely on human judgment—can begin to feel like a risk itself.

None of these second-order effects trigger traditional risk alerts. They don’t breach thresholds or generate losses. In many cases, they coincide with improved efficiency and consistency in organizational processes. That’s precisely why they’re so difficult to surface through traditional AI governance frameworks.

IV. Why Traditional Risk Frameworks Miss AI-Related Organizational Change

The Structural Blindspot in AI Governance

Risk frameworks excel at managing risks that can be clearly defined. They rely on categorization, ownership, escalation, control—all of which presuppose the risk has a discernible shape.

AI-related second-order effects in organizations rarely do.

Traditional risk frameworks assume stable decision roles, traceable causality, identifiable failure points. These assumptions hold reasonably well for many risk types. AI governance challenges subtly disrupt all of them.

Organizational decision-making becomes distributed across systems, teams, and time. The boundary between human judgment and AI system output blurs. Causality becomes harder to isolate, particularly when outcomes result from accumulated micro-decisions rather than discrete events.

This creates a structural blind spot in risk management.

Risk frameworks are, by design, retrospective. They learn from incidents, losses, deviations. They’re calibrated to detect variance. AI-related organizational drift often manifests as convergence—decisions becoming more consistent, more aligned with AI system outputs. From a framework perspective, this looks like improvement.
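To make the variance-versus-convergence point concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the function name, the threshold, and the quarterly figures are invented, and the "gap" is a hypothetical measure of how far a committee's final rating sits from the model's rating. It shows how an indicator that only alerts on dispersion stays silent while agreement with the model climbs to one hundred percent.

```python
from statistics import pstdev


def variance_alert(gaps, threshold):
    """Variance-style indicator: alert only when dispersion exceeds a threshold."""
    return pstdev(gaps) > threshold


# Hypothetical data: gap between the committee's final rating and the
# model's rating for six decisions per quarter (0 means full agreement).
gaps_by_quarter = {
    "Q1": [-2, 1, 0, 3, -1, 2],
    "Q2": [-1, 1, 0, 1, 0, -1],
    "Q3": [0, 0, 1, 0, 0, 0],
    "Q4": [0, 0, 0, 0, 0, 0],
}
THRESHOLD = 2.5  # assumed alert level, chosen only for illustration

# The variance-based indicator, evaluated per quarter.
alerts = {q: variance_alert(g, THRESHOLD) for q, g in gaps_by_quarter.items()}

# Share of decisions that matched the model exactly, per quarter.
agreement = {
    q: sum(1 for gap in g if gap == 0) / len(g)
    for q, g in gaps_by_quarter.items()
}

for quarter in gaps_by_quarter:
    print(quarter, "alert:", alerts[quarter], "agreement:", round(agreement[quarter], 2))
```

In this invented series the indicator never fires, because the spread only shrinks. The agreement share tells the opposite story, which is the sense in which convergence reads as improvement from inside the framework.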

Key risk indicators offer little help in AI governance. It’s difficult to define thresholds for diminished challenge or borrowed confidence. These are gradients in organizational behavior, not states. They evolve through behavior, language, expectation—domains that sit uncomfortably within most risk taxonomies.

This isn’t a failure of competence. It’s a consequence of structure.

Risk frameworks excel at managing what has already been named. They struggle with risks that take shape between categories—where technology, organizational behavior, and governance intersect. AI risk sits precisely in that space.

V. The New AI Risk Failure Mode

Organizational Adaptation vs. Catastrophic Technology Failure

The dominant AI risk narrative oscillates between two extremes: breakthrough or catastrophe. Either AI systems transform organizational decision-making for the better, or they fail dramatically, triggering regulatory fallout.

The more likely failure mode is quieter.

Organizations don’t lose control of AI systems overnight. They drift. Judgment becomes procedural. Challenge becomes performative. Accountability becomes ambient rather than owned.

Nothing “goes wrong” in the conventional sense. Decisions continue. Performance indicators remain stable. AI governance processes remain intact. But organizational situational awareness erodes.

Over time, organizations become less able to explain why decisions are made—only how they were arrived at. The distinction matters. When outcomes eventually disappoint, post-hoc explanations focus on process rather than judgment. Organizational learning becomes shallow.

This isn’t an AI problem in isolation. It’s a human and organizational one, amplified by technology.

VI. What Risk Leaders Should Watch For

Early Warning Signals of AI-Related Judgment Erosion

There’s a temptation here to move to solutions—to propose controls, oversight mechanisms, new AI governance layers.

That instinct is understandable. And premature.

Before asking what to fix, it’s worth paying attention to what’s changing in organizational decision-making. Early signals tend to appear in subtle places.

Ask yourself:

- When was the last time someone in your credit committee contradicted the AI scoring?

- How many minutes does your team spend on AI-flagged approvals versus traditional ones?

- Can your decision-makers articulate why they agreed with the AI system’s recommendation?

- What happens when someone wants to override the AI in your organization?

- Has your challenge rate declined without a corresponding improvement in decision outcomes?

If I were advising that credit committee, I’d ask them to track one number: how many times someone disagrees with the AI in a month. My guess? It’s trending toward zero.
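That one number can be sketched in a few lines. The snippet below is an illustration under stated assumptions, not a prescribed method: the `Decision` record, its field names, and the sample log are hypothetical, and it treats "disagreement" as any case where the final decision differs from the AI recommendation. That proxy misses challenge that was voiced but did not change the outcome, so a real log would need to capture that separately.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    month: str              # e.g. "2024-01" (hypothetical log field)
    ai_recommendation: str  # what the system suggested
    final_decision: str     # what the committee actually decided


def monthly_challenge_rate(decisions):
    """Share of decisions per month where the committee departed from the AI."""
    totals = defaultdict(int)
    challenged = defaultdict(int)
    for d in decisions:
        totals[d.month] += 1
        if d.final_decision != d.ai_recommendation:
            challenged[d.month] += 1
    return {month: challenged[month] / totals[month] for month in sorted(totals)}


# Invented sample log: four decisions a month over three months.
log = [
    Decision("2024-01", "approve", "decline"),
    Decision("2024-01", "approve", "approve"),
    Decision("2024-01", "decline", "approve"),
    Decision("2024-01", "approve", "approve"),
    Decision("2024-02", "approve", "approve"),
    Decision("2024-02", "decline", "approve"),
    Decision("2024-02", "approve", "approve"),
    Decision("2024-02", "approve", "approve"),
    Decision("2024-03", "approve", "approve"),
    Decision("2024-03", "decline", "decline"),
    Decision("2024-03", "approve", "approve"),
    Decision("2024-03", "approve", "approve"),
]

rates = monthly_challenge_rate(log)
for month, rate in rates.items():
    print(month, f"{rate:.0%}")
```

The number on its own proves nothing. A falling rate is a prompt to ask why, not a verdict.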

AI risk, at its core, isn’t about losing control of systems. It’s about losing sight of how organizational decisions are actually being shaped—long before any formal failure occurs.

That’s the risk worth watching.

📌 Key Takeaways:

- 1️⃣ AI governance frameworks focus on technical controls but miss organizational behavior changes

- 2️⃣ Risk accumulates through organizational incentive shifts, delegation, and erosion of human judgment

- 3️⃣ Second-order effects (borrowed confidence, weakened challenge, diffused accountability) don't trigger traditional alerts

- 4️⃣ Organizations drift toward passive acceptance of AI recommendations without realizing it

- 5️⃣ Early warning signals require watching decision velocity, challenge rates, and accountability clarity in AI-supported processes

Closing Note

Most organizations won’t experience AI failure as a dramatic moment.

There will be no single decision to point to, no clear breakdown in AI governance control, no obvious line between intention and outcome. Decisions will continue to be made. Performance indicators may even improve.

What will change is harder to name. Judgment will become quieter. Challenge more procedural. Accountability more ambient than owned. Over time, it will become increasingly difficult to explain not how decisions were reached, but why they felt right at the time.

By the time attention is forced onto these questions, the organizational shift will already have occurred—not through error or recklessness, but through adaptation.

This is where AI risk truly sits. Not in the technology itself, but in the slow reshaping of how institutions decide.

Frequently Asked Questions

For readers seeking quick answers to specific questions about AI governance and organizational risk:

What is AI governance and why does it fail to catch organizational risk?

AI governance traditionally focuses on technical controls: model validation, data quality, bias testing, and explainability frameworks. However, this technical framing misses how AI systems reshape organizational decision-making. The most consequential AI risks accumulate in human behavior—how confidence forms, how challenge weakens, and how accountability diffuses across teams. Traditional AI governance frameworks weren't designed to detect these gradual behavioral shifts.

How does AI change organizational decision-making?

AI systems shift organizational decision-making from active judgment to passive acceptance. What starts as "AI-supported decisions" becomes "AI-led decisions with human confirmation." Organizations don't notice because performance metrics often improve while judgment quietly erodes. This organizational drift happens gradually, without triggering traditional risk alerts in AI governance processes.

What are second-order effects in AI risk management?

Second-order effects are indirect consequences that emerge from AI adoption in organizations: borrowed confidence (feeling certain without understanding why), procedural challenge (asking questions for governance theater rather than genuine inquiry), diffused accountability (no one fully owning AI-influenced outcomes), and normalization (AI-supported decisions becoming unquestionable defaults). Because none of them is routinely measured, these effects can compound unnoticed while first-order technical risks are being tested and controlled.

Why can't traditional risk frameworks detect AI organizational drift?

Risk frameworks are built to detect failures, not organizational adaptation. They're calibrated for variance, but AI-related drift often manifests as convergence—decisions becoming more consistent and aligned with AI system outputs. From a framework perspective, this looks like improvement. Additionally, traditional frameworks struggle to measure gradients like "diminished challenge" or "borrowed confidence" in organizational decision-making.

What should CROs and risk leaders watch for with AI governance?

Track decision velocity on AI-supported items, challenge rates (how often teams disagree with AI recommendations), accountability clarity (who owns decisions when AI systems are involved), and explanation quality (can decision-makers articulate reasoning beyond "the system suggested"). If these organizational metrics shift without corresponding performance improvements, AI-related judgment drift may be occurring.
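As a rough sketch of that last condition, the snippet below compares two periods and flags the combination described above: decisions getting faster, challenge falling, and outcomes not improving. The class, field names, and function are hypothetical. The time and challenge figures echo the illustrative committee examples earlier in this piece, and the outcome rates are invented. It covers only the two quantitative signals; accountability clarity and explanation quality need human review rather than a formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeriodMetrics:
    median_minutes_per_decision: float  # decision velocity
    challenge_rate: float               # share of AI recommendations contested
    bad_outcome_rate: float             # assumed outcome measure; lower is better


def possible_judgment_drift(before, after):
    """Flag faster, less-challenged decisions that come without better outcomes."""
    faster = after.median_minutes_per_decision < before.median_minutes_per_decision
    less_challenge = after.challenge_rate < before.challenge_rate
    outcomes_improved = after.bad_outcome_rate < before.bad_outcome_rate
    return faster and less_challenge and not outcomes_improved


# Illustrative figures only.
before = PeriodMetrics(median_minutes_per_decision=45, challenge_rate=0.15, bad_outcome_rate=0.04)
after_flat = PeriodMetrics(median_minutes_per_decision=10, challenge_rate=0.03, bad_outcome_rate=0.04)
after_better = PeriodMetrics(median_minutes_per_decision=10, challenge_rate=0.03, bad_outcome_rate=0.02)

drift_when_outcomes_flat = possible_judgment_drift(before, after_flat)
drift_when_outcomes_better = possible_judgment_drift(before, after_better)

print("Outcomes flat:", drift_when_outcomes_flat)
print("Outcomes improved:", drift_when_outcomes_better)
```

A flag here is a reason to look closer, not evidence of drift. Equally, improved outcomes do not rule drift out, since performance metrics can improve while judgment erodes.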

Is AI risk a technology problem or an organizational problem?

AI risk is fundamentally an organizational and human problem amplified by technology. The risk isn't that AI systems fail technically—it's that organizations adapt their decision-making processes in ways that erode judgment, weaken challenge, and diffuse accountability. Technical AI governance controls are necessary but insufficient for managing this organizational dimension of AI risk.

Next in this series: Part 2: The Temporal Mismatch—why risk now moves faster than the systems designed to manage it.


Related Reading:

In This Series:

- Part 2: The Temporal Mismatch—why risk now moves faster than the governance systems designed to manage it. (Coming soon)

- Part 3: Where Risk Becomes Invisible—Organizational Blind Spots (Coming soon)

- Part 4: After The Old Tools—What Comes Next (Coming soon)

These signals rarely trigger action on their own. They require interpretation. And interpretation requires awareness.